I’ve written previously about how to turn on time-taken values in web logs for IIS, Tomcat, and Apache. Armed with time-taken data, you can identify which are the slow pages in your system and decide where to focus your performance tuning efforts.

Slow pages fall into two basic categories – pages that are inherently slow, and pages that are vulnerable to being slow. If a page is inherently slow, performance will drag no matter how heavily loaded the system is or what time of day it is accessed. If it is vulnerable to being slow, it means that certain conditions can lead to slowness, such as low memory on a database, high CPU utilization on a server, etc. In this case, while there might be opportunities to make the page less vulnerable, like query tuning, the actual problem may lie elsewhere.

Visualizing the Data

Graphing your performance data is great to visually learn about your performance issues. If a page is inherently slow, you would expect to see slow page response times spread relatively evenly throughout the day. If your page’s performance is being affected by external factors, like database utilization, you would expect page response times to increase with load or cluster around a specific point in time.

The best place to start is to load your data up into excel and create a chart. Excel’s charts are limited to 32,000 data points, so it is very possible that you will not be able to load as much data as you want. In this case, you can just grab a percentage of your data. While I’m sure there is a way to do this in excel, I find it much easier to use a quick awk script to grab every nth row. For example, to grab every 3rd row, you would run:

- cat ex101201.log | awk ‘// { n++; if ((n % 3) == 0) { print $0 } }’ > ex101201_sampled.txt

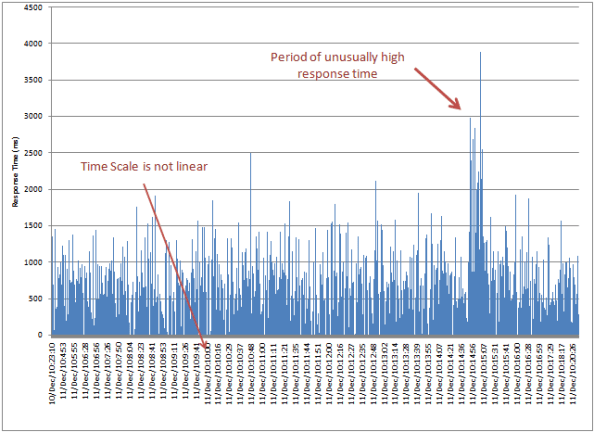

Once you get it into excel, select a bar chart, and voila:

You can quickly get a sense of what your page response time looks like – in this example, response times of between 500 ms and 1500 ms are “normal”. You also immediately see that there was a period where response times shot up much higher than “normal”.

The limitation of this graph is that the x-axis of the graph has no actual correspondence to time. While many sites have low request volumes during the wee hours and heavier volumes during business hours, these types of graphs just plot one point right after the other. All sense of request volume over time is lost, and the duration of events is distorted.

Building a Scatter Plot

Excel is smart enough to properly interpret time data, provided you give it to it in a format it understands. Sadly, most web log files record their date stamps in ways that Excel just doesn’t interpret correctly. For example, Tomcat will generate date-times like this:

- [11/Dec/2010:00:17:16 -0500]

If you use a copy/replace or sed script or other technique to reformat them into a more standard “mm/dd/yyyy hh:mm:ss” type of format, Excel will automatically re-interpret them as date-time values.

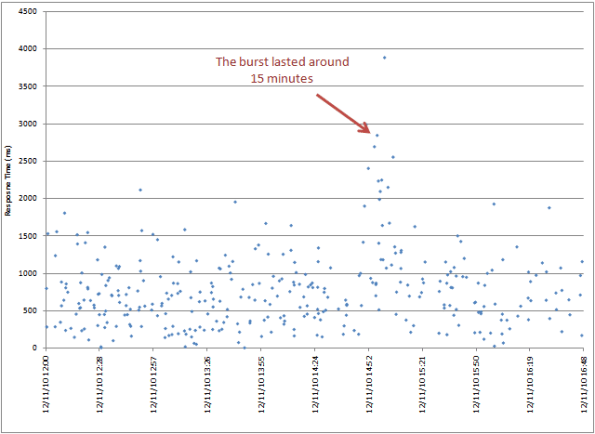

Once you do this, you can insert the data as a scatter plot, with event time on the x-axis and response time on the y-axis.

Now a number of things jump out. First, you can see the density of responses. Traffic is clearly very light outside of business hours, but during this period, it still stays within the 500-1500 ms range, so there is not a strong correlation between load and performance. You can also see that the “burst” of slower performance in the afternoon was extremely tightly bound to a very short time window.

Zoom In

With the x-axis now evenly spread across time, you can change the settings on the x-axis to zoom in on particularly times of interest. To do this, right click on the x-axis and choose “Format Axis…”

Change the Minimum and Maximum from “Auto” to “Fixed”, and then adjust the values. Internally, Excel represents each day as a number, with the time of day as the value after the decimal point. So, the period between 0.5 and 0.7 is essentially from noon until a little before 5:00 pm.

You can now see fine details for this chunk of time.

Hello,

Thank you the article.

Another information which may interest a reader : 0.042 (meaning of the life, universe & everything…)

If one want to configure Horizontal Axis with an interval of 30 minutes user Major Unit = 0.021

If one want to configure Horizontal Axis with an interval of 15 minutes user Major Unit = 0.0105

If one want to configure Horizontal Axis with an interval of 5 minutes user Major Unit = 0.0035

If one want to configure Horizontal Axis with an interval of 1 minute user Major Unit = 0.0007

a+, =)

-=Finiderire=-